Train AI on Coding Reasoning.

The models shaping the future of AI are only as rigorous as the coders behind them. Apply your expertise where it matters, and get paid to do it.

Kevin

As seen on

This is not busywork.

The most advanced code generation models still produce race conditions, ignore edge cases, hallucinate APIs, and write O(n³) solutions when linear time is trivial. You're the engineering filter that catches what compilers can't — one annotation at a time.

Three steps.

No overhead.

Apply & Qualify

Complete a short engineering assessment. We evaluate your ability to identify correctness bugs, reason about complexity, and write clean, idiomatic code. No résumé required — your code speaks for itself.

Get Matched

Based on your preferred stack and assessment results, you're matched to projects in your areas of expertise. Systems programming, web backends, ML pipelines, mobile, infra — you choose what fits your background.

Work & Get Paid

Complete tasks on your own schedule. Each task has clear specifications, test cases, and a defined scope. Payment is per-task, processed weekly, starting at $60/hour.

What you'll actually do.

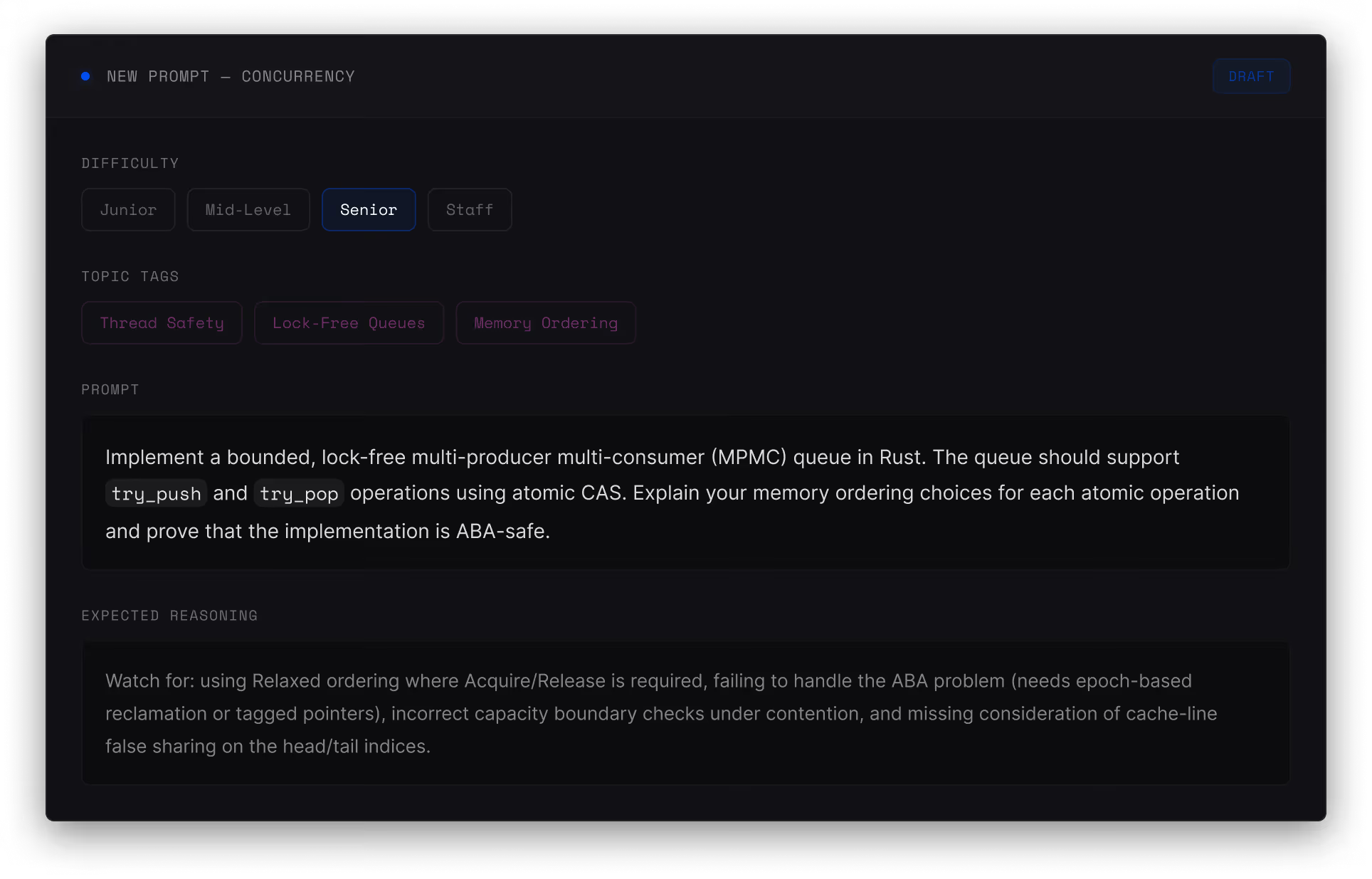

Write a prompt

Craft precise engineering prompts designed to probe the boundaries of model reasoning — not toy problems, but challenges that expose incorrect algorithms, unsafe memory access, and flawed system design decisions.

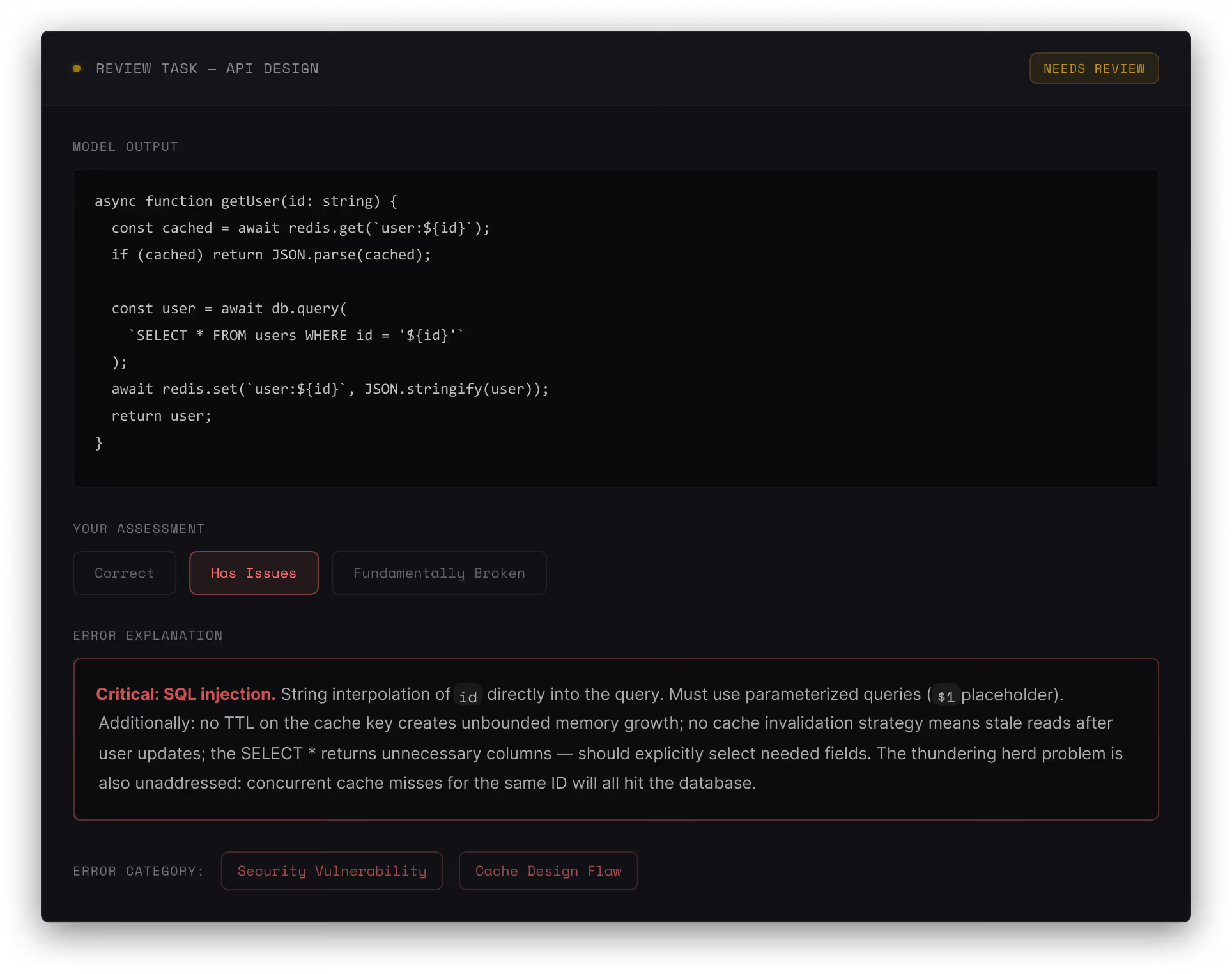

Review AI output

Evaluate whether the model's code actually works — catching off-by-one errors in loop logic, identifying race conditions in concurrent implementations, and flagging O(n²) solutions where a more efficient algorithm was straightforward.

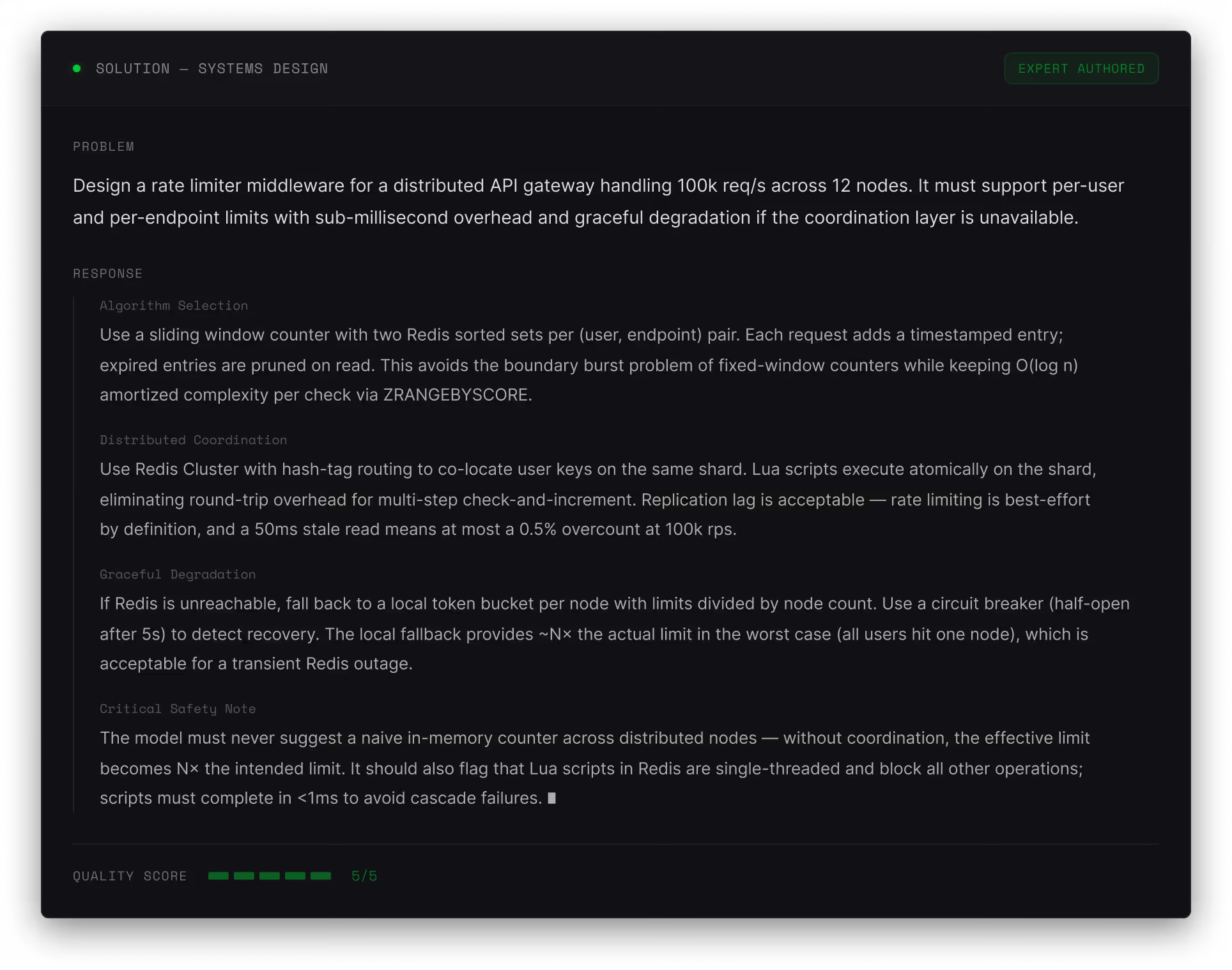

Write the correct solution

Author a complete, production-quality implementation that demonstrates the correct approach. This becomes training signal for the next model generation.

Where models need you most.

These are the engineering domains where current AI code generation consistently produces dangerous output. Your expertise directly addresses the hardest open problems in coding AI.

Systems & Infrastructure

Evaluate AI reasoning about distributed systems, networking, operating systems, and infrastructure design. Verify claims about consensus protocols, storage engines, and scalability trade-offs.

Algorithms & Data Structures

Review model outputs on algorithm design, complexity analysis, and data structure selection. Catch incorrect Big-O claims, flawed dynamic programming formulations, and suboptimal data structure choices.

Security & Cryptography

Assess AI-generated code for security vulnerabilities, cryptographic misuse, and authentication flaws. Identify injection vectors, broken crypto implementations, and authorization bypass patterns.

Backend & API Design

Evaluate reasoning about API architecture, database design, caching strategies, and service communication. Identify N+1 queries, incorrect transaction isolation levels, and flawed idempotency implementations.

Frontend & Performance

Review model outputs on frontend architecture, rendering performance, state management, and accessibility. Verify claims about bundle optimization, hydration strategies, and browser API usage.

Research-grade standards.

We require excellence, just as you would require in peer review. The researchers and academics on our platform aren’t here to tick boxes. They’re here because the quality standard matters to them.

Coding tasks completed weekly

Active developer contributors

Tech companies represented

Built for people who think in systems.

CS Students

Upper-division and graduate CS students with strong foundations in algorithms, systems, and software engineering principles.

Software Engineers

Professional developers with production experience looking for flexible, intellectually demanding work outside their day jobs.

Staff & Principal Eng.

Senior engineers applying deep systems knowledge and architectural expertise to AI training data on their own schedule.

Open-Source Contributors

Maintainers and active contributors with demonstrated ability to write clean, well-tested, production-quality code.

Frequently Asked Questions

Have questions? Here we answer the most common questions.

You’ll work on tasks that help AI perform better—like reviewing responses, checking accuracy, refining prompts, and rating outputs. No coding or technical background needed.

You’ll work on projects that improve AI systems, such as reviewing responses, checking accuracy, refining prompts, ranking outputs, and validating model behavior.

Projects include data labeling, annotation, evaluation, and quality review across text, images, and structured tasks—focused on improving real production AI systems.

This is ideal for people with strong reasoning skills, attention to detail, and subject-matter expertise who want flexible, remote work with real impact.

This is a flexible, task-based contractor role. There is no long-term commitment required, and you can work as much or as little as you choose.

Available projects vary but commonly include prompt evaluation, response ranking, factual accuracy checks, domain-specific review, and structured annotation tasks.

Researchers, students, professionals, and independent contributors who enjoy analytical work and want to contribute to advancing AI systems.

This is remote, asynchronous work focused on output quality rather than hours logged. Performance is evaluated based on contribution quality and consistency.

Get ahead in a changing workforce.

No recruiters. No interviews. Just meaningful work and real compensation.

.png)

.png)

.png)

.png)